This experiment uses the following synthetic dataset:

$$y = 0.05 + \sum_{i=1}^{d} 0.01x_i + \epsilon, \quad \text{where } \epsilon \sim N(0, 0.01^2)$$

    import torch
    from torch import nn
    from d2l import torch as d2l

    # Generate the dataset
    n_train, n_test, num_inputs, batch_size = 20, 100, 200, 5
    true_w, true_b = torch.ones((num_inputs, 1)) * 0.01, 0.05
    train_data = d2l.synthetic_data(true_w, true_b, n_train)  # synthetic training data
    train_iter = d2l.load_array(train_data, batch_size)
    test_data = d2l.synthetic_data(true_w, true_b, n_test)  # synthetic test data
    test_iter = d2l.load_array(test_data, batch_size, is_train=False)
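
For readers without `d2l` installed, the data-generation step can be approximated in plain PyTorch. The sketch below assumes the helper draws features from a standard normal distribution and adds Gaussian noise with standard deviation 0.01; `make_synthetic_data` is a hypothetical name used only for this illustration, not part of `d2l`.

```python
import torch

def make_synthetic_data(w, b, num_examples, noise_std=0.01):
    """Hypothetical stand-in for d2l.synthetic_data: y = Xw + b + noise."""
    # Assumption: features are i.i.d. standard normal.
    X = torch.normal(0.0, 1.0, (num_examples, len(w)))
    y = X @ w + b
    # Additive Gaussian noise, epsilon ~ N(0, noise_std^2).
    y = y + torch.normal(0.0, noise_std, y.shape)
    return X, y.reshape(-1, 1)

torch.manual_seed(0)  # fixed seed so the sketch is reproducible
n_train, num_inputs = 20, 200
true_w, true_b = torch.ones((num_inputs, 1)) * 0.01, 0.05
features, labels = make_synthetic_data(true_w, true_b, n_train)
print(features.shape, labels.shape)
```

With only 20 training examples and 200 features, the problem is heavily underdetermined, which is what makes overfitting easy to provoke.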

    # Initialize the model parameters
    def init_params():
        w = torch.normal(0, 1, (num_inputs, 1), requires_grad=True)
        b = torch.zeros(1, requires_grad=True)
        return [w, b]

    # Define the L2 norm penalty
    def l2_penalty(w):
        return torch.sum(w.pow(2)) / 2
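
To see why this penalty is called weight decay, it helps to write out one minibatch SGD step. The derivation below is a sketch that assumes the objective is the minibatch average of a per-example loss $\ell_i$ plus $\frac{\lambda}{2}\|\mathbf{w}\|^2$; the learning rate $\eta$ and minibatch $\mathcal{B}$ are symbols introduced here for illustration.

$$
\begin{aligned}
\nabla_{\mathbf{w}} \left( \frac{\lambda}{2} \|\mathbf{w}\|^2 \right) &= \lambda \mathbf{w}, \\
\mathbf{w} &\leftarrow \mathbf{w} - \eta \left( \frac{1}{|\mathcal{B}|} \sum_{i \in \mathcal{B}} \nabla_{\mathbf{w}} \ell_i + \lambda \mathbf{w} \right)
= (1 - \eta \lambda)\, \mathbf{w} - \frac{\eta}{|\mathcal{B}|} \sum_{i \in \mathcal{B}} \nabla_{\mathbf{w}} \ell_i
\end{aligned}
$$

Under these assumptions, each step shrinks the weights by a factor of $1 - \eta\lambda$ before applying the usual gradient update. With the learning rate 0.003 and `lambd=3` used in this experiment, that factor is $1 - 0.003 \times 3 = 0.991$.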

    # Define the training loop
    def train(lambd):
        w, b = init_params()
        net, loss = lambda X: d2l.linreg(X, w, b), d2l.squared_loss
        num_epochs, lr = 100, 0.003
        animator = d2l.Animator(xlabel='epochs', ylabel='loss', yscale='log',
                                xlim=[5, num_epochs], legend=['train', 'test'])
        for epoch in range(num_epochs):
            for X, y in train_iter:
                # Add the L2 norm penalty term.
                # Broadcasting adds the scalar l2_penalty(w) to each of the
                # batch_size per-example losses.
                l = loss(net(X), y) + lambd * l2_penalty(w)
                l.sum().backward()
                d2l.sgd([w, b], lr, batch_size)
            if (epoch + 1) % 5 == 0:
                animator.add(epoch + 1, (d2l.evaluate_loss(net, train_iter, loss),
                                         d2l.evaluate_loss(net, test_iter, loss)))
        print('L2 norm of w:', torch.norm(w).item())
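
The broadcasting step in the loop can be checked in isolation. The sketch below is a plain-PyTorch illustration, not part of the original code: it assumes `d2l.squared_loss` computes `(y_hat - y) ** 2 / 2` per example and that `d2l.sgd` divides the accumulated gradient by `batch_size`, and it uses random stand-in data. Under those assumptions, adding the scalar penalty to every per-example loss and then summing is equivalent to shrinking `w` by `1 - lr * lambd` and following the averaged data gradient.

```python
import torch

torch.manual_seed(0)
batch_size, num_inputs, lr, lambd = 5, 200, 0.003, 3.0
X = torch.normal(0.0, 1.0, (batch_size, num_inputs))  # stand-in minibatch
y = torch.normal(0.0, 1.0, (batch_size, 1))
w = torch.normal(0.0, 1.0, (num_inputs, 1), requires_grad=True)
b = torch.zeros(1, requires_grad=True)

# Per-example squared loss, shape (batch_size, 1).
per_example = (X @ w + b - y) ** 2 / 2
penalty = torch.sum(w.pow(2)) / 2      # scalar
l = per_example + lambd * penalty      # broadcast: still (batch_size, 1)
l.sum().backward()

with torch.no_grad():
    # Manual SGD step: summed gradient divided by batch_size.
    w_manual = w - lr * w.grad / batch_size
    # Same step written as "shrink, then follow the data gradient".
    data_grad = X.T @ (X @ w + b - y) / batch_size
    w_decay = (1 - lr * lambd) * w - lr * data_grad

print(l.shape, torch.allclose(w_manual, w_decay, atol=1e-5))
```

The penalty is counted `batch_size` times by the sum and divided by `batch_size` again in the update, so its net contribution to the gradient is `lambd * w`.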

Start training!

# 忽略正则化直接训练

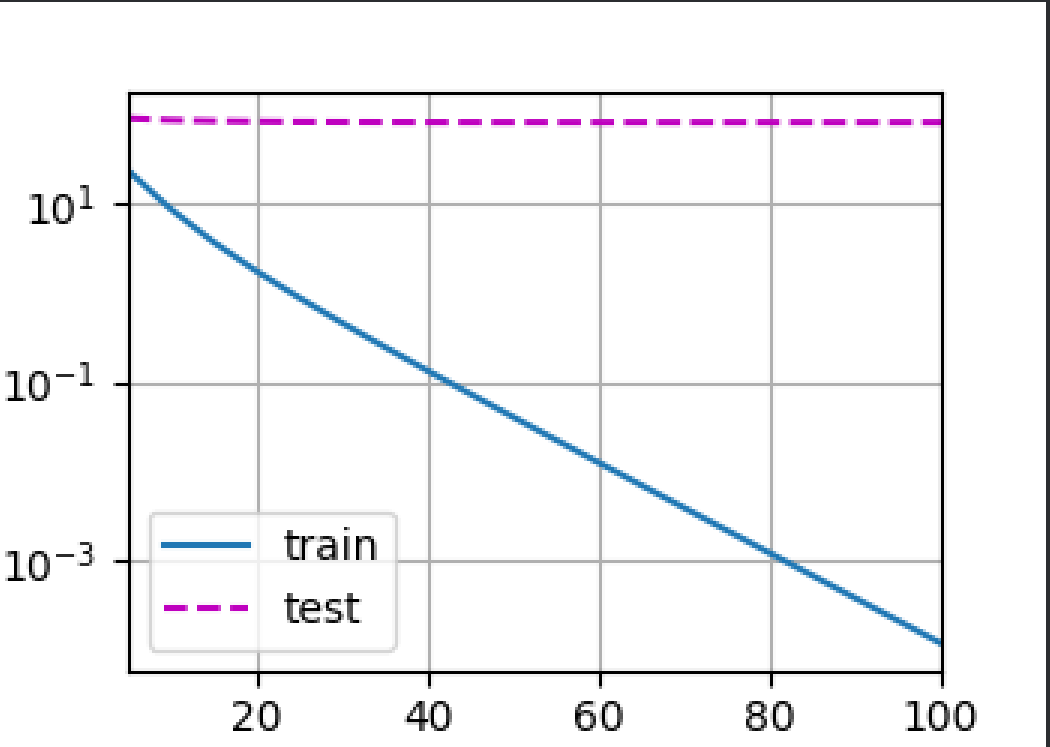

train(lambd=0)

d2l.plt.show()

As the plot shows, training without regularization leads to catastrophic overfitting.

# 使用权重衰减

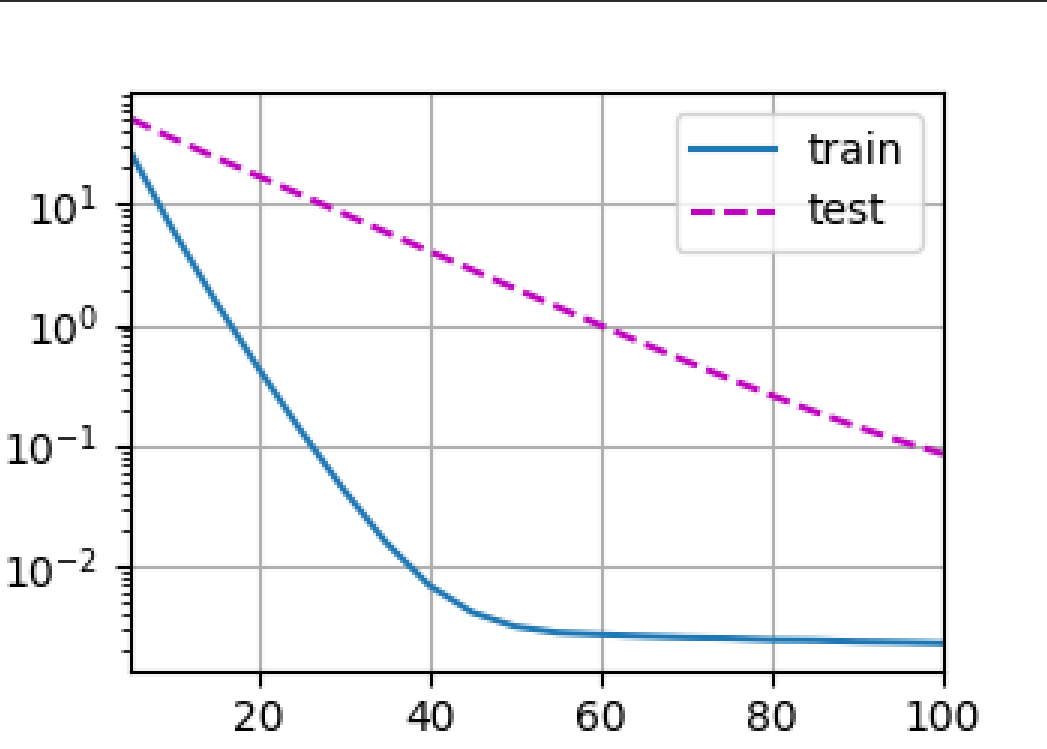

train(lambd=3)

d2l.plt.show()

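With weight decay, the model fits properly!

You can try different values of `lambd`: in this experiment, setting it to 4 gave an even better fit, but larger values caused underfitting, so this hyperparameter has to be tuned with care.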
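
One way to make this tuning systematic is to sweep several decay strengths and compare training loss, test loss, and the weight norm. The sketch below is an illustration under assumptions rather than the code behind the plots: it regenerates the data in plain PyTorch and uses the `weight_decay` argument of `torch.optim.SGD` in place of the hand-written penalty. The names `make_data` and `run`, the seeds, and the candidate values are all made up for the example, and its numbers will not match the figures exactly.

```python
import torch
from torch import nn

def make_data(n, num_inputs=200):
    # y = 0.05 + sum(0.01 * x_i) + noise, noise ~ N(0, 0.01^2)
    X = torch.normal(0.0, 1.0, (n, num_inputs))
    y = X @ (torch.ones((num_inputs, 1)) * 0.01) + 0.05
    return X, y + torch.normal(0.0, 0.01, y.shape)

def run(wd, train, test, num_epochs=100, lr=0.003, batch_size=5):
    (X, y), (X_test, y_test) = train, test
    net = nn.Linear(X.shape[1], 1)
    nn.init.normal_(net.weight)   # w ~ N(0, 1), as in init_params
    nn.init.zeros_(net.bias)
    loss = nn.MSELoss()
    # Decay the weight only; leave the bias unpenalized.
    opt = torch.optim.SGD([
        {"params": [net.weight], "weight_decay": wd},
        {"params": [net.bias]},
    ], lr=lr)
    for _ in range(num_epochs):
        perm = torch.randperm(len(X))  # reshuffle each epoch
        for i in range(0, len(X), batch_size):
            idx = perm[i:i + batch_size]
            opt.zero_grad()
            # Halve the MSE to match the (y_hat - y)^2 / 2 convention.
            (loss(net(X[idx]), y[idx]) / 2).backward()
            opt.step()
    with torch.no_grad():
        train_loss = loss(net(X), y).item() / 2
        test_loss = loss(net(X_test), y_test).item() / 2
    return train_loss, test_loss, net.weight.norm().item()

torch.manual_seed(0)
train, test = make_data(20), make_data(100)
results = {}
for wd in [0, 1, 3, 4, 10]:
    torch.manual_seed(1)  # same initial weights for every setting
    results[wd] = run(wd, train, test)
    print("wd=%2d  train=%.4f  test=%.4f  |w|=%.4f" % (wd, *results[wd]))
```

Comparing on the test set here is only for illustration; in practice a separate validation set would normally be used to choose the value.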