After standardization, each mini-batch has zero mean and unit variance, which can speed up convergence.
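To make that claim concrete, here is a minimal sketch in plain Python (no torch, so the arithmetic stays visible) that standardizes a single feature across one mini-batch. The helper name `standardize` and the sample values are made up for illustration; the variance is the biased (divide by n) estimate, matching what `batch_norm` computes.

```python
import math


def standardize(values, eps=1e-5):
    """Standardize one feature over a mini-batch using the biased variance."""
    n = len(values)
    mean = sum(values) / n
    var = sum((v - mean) ** 2 for v in values) / n
    return [(v - mean) / math.sqrt(var + eps) for v in values]


batch = [2.0, 4.0, 6.0, 8.0]  # hypothetical activations of one feature
normalized = standardize(batch)

# Recompute the statistics of the standardized values
new_mean = sum(normalized) / len(normalized)
new_var = sum((v - new_mean) ** 2 for v in normalized) / len(normalized)
print(f"mean={new_mean:.6f}, var={new_var:.6f}")
```

The variance comes out marginally below 1 because `eps` is added inside the square root for numerical stability.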

import torch
from torch import nn
from d2l import torch as d2l


def batch_norm(X, gamma, beta, moving_mean, moving_var, eps, momentum):
    # momentum controls how moving_mean and moving_var are updated
    # Use is_grad_enabled to tell whether we are in training or prediction mode
    if not torch.is_grad_enabled():
        # In prediction mode, use the moving-average mean and variance passed in
        X_hat = (X - moving_mean) / torch.sqrt(moving_var + eps)
    else:
        assert len(X.shape) in (2, 4)
        if len(X.shape) == 2:
            # Fully connected layer: per-feature mean and variance (over the batch dimension)
            mean = X.mean(dim=0)
            var = ((X - mean) ** 2).mean(dim=0)
        else:
            # 2D convolutional layer: per-channel (axis=1) mean and variance.
            # Keep X's shape so that broadcasting works later
            mean = X.mean(dim=(0, 2, 3), keepdim=True)
            var = ((X - mean) ** 2).mean(dim=(0, 2, 3), keepdim=True)
        # In training mode, standardize with the current batch statistics
        X_hat = (X - mean) / torch.sqrt(var + eps)
        # Update the moving averages of the mean and variance
        moving_mean = momentum * moving_mean + (1 - momentum) * mean
        moving_var = momentum * moving_var + (1 - momentum) * var
    Y = gamma * X_hat + beta  # scale and shift
    return Y, moving_mean.data, moving_var.data
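The running statistics in `batch_norm` follow an exponential moving average. The sketch below replays that update rule on plain floats to show how fast the estimate approaches the batch statistic with `momentum=0.9`. The helper `ema_update` and the constant batch mean of 2.0 are assumptions made purely for illustration.

```python
def ema_update(moving, batch_stat, momentum=0.9):
    """Same update rule as in batch_norm: keep `momentum` of the old value."""
    return momentum * moving + (1.0 - momentum) * batch_stat


moving_mean = 0.0  # same initial value the BatchNorm layer uses
batch_mean = 2.0   # hypothetical: pretend every mini-batch has mean 2.0

history = []
for step in range(50):
    moving_mean = ema_update(moving_mean, batch_mean)
    history.append(moving_mean)

print(f"after 1 step:   {history[0]:.4f}")
print(f"after 50 steps: {history[-1]:.4f}")
```

Each step closes 10% of the remaining gap, so the moving estimate needs a few dozen updates before it is close to the batch statistic. Predictions made after only a handful of training steps would therefore rely on estimates that are still near their initial values.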

# Create the BatchNorm layer
class BatchNorm(nn.Module):
    # num_features: number of outputs of a fully connected layer,
    #               or number of output channels of a convolutional layer
    # num_dims: 2 for a fully connected layer, 4 for a convolutional layer
    def __init__(self, num_features, num_dims):
        super().__init__()
        if num_dims == 2:
            shape = (1, num_features)
        else:
            shape = (1, num_features, 1, 1)
        # Scale and shift parameters (updated by gradient descent), initialized to 1 and 0
        self.gamma = nn.Parameter(torch.ones(shape))
        self.beta = nn.Parameter(torch.zeros(shape))
        # Variables that are not model parameters, initialized to 0 and 1
        self.moving_mean = torch.zeros(shape)
        self.moving_var = torch.ones(shape)

    def forward(self, X):
        # If X is not in main memory, copy moving_mean and moving_var
        # to the device (GPU memory) where X lives
        if self.moving_mean.device != X.device:
            self.moving_mean = self.moving_mean.to(X.device)
            self.moving_var = self.moving_var.to(X.device)
        # Save the updated moving_mean and moving_var
        Y, self.moving_mean, self.moving_var = batch_norm(
            X, self.gamma, self.beta, self.moving_mean, self.moving_var,
            eps=1e-5, momentum=0.9)
        return Y
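For comparison, PyTorch ships a built-in `nn.BatchNorm2d` layer. The sketch below is not part of these notes; it assumes torch is installed and uses a made-up random input to show the same training versus evaluation behavior. Two differences from the hand-written layer are worth knowing: the built-in layer switches mode through `train()` / `eval()` rather than `torch.is_grad_enabled()`, and its `momentum` argument (default 0.1) is the weight given to the new batch statistic, so it corresponds to `1 - momentum` in the code above.

```python
import torch
from torch import nn

torch.manual_seed(0)
bn = nn.BatchNorm2d(num_features=6)   # built-in counterpart of BatchNorm(6, 4)
X = torch.randn(8, 6, 4, 4) * 3 + 5   # hypothetical batch: mean near 5, std near 3

bn.train()                            # training mode: normalize with batch statistics
Y_train = bn(X)
per_channel_mean = Y_train.mean(dim=(0, 2, 3))
per_channel_var = Y_train.var(dim=(0, 2, 3), unbiased=False)

bn.eval()                             # evaluation mode: normalize with running statistics
with torch.no_grad():
    Y_eval = bn(X)

print(per_channel_mean)
print(per_channel_var)
```

In training mode every channel ends up with mean close to 0 and variance close to 1. In evaluation mode the output differs, because after a single update the running statistics are still far from the statistics of this batch.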

# Apply BatchNorm to the LeNet model
net = nn.Sequential(
    nn.Conv2d(1, 6, kernel_size=5, padding=2), BatchNorm(6, 4),
    nn.ReLU(),
    nn.MaxPool2d(kernel_size=2, stride=2),
    nn.Conv2d(6, 16, kernel_size=5), BatchNorm(16, 4),
    nn.ReLU(),
    nn.MaxPool2d(kernel_size=2, stride=2),
    nn.Flatten(),
    nn.Linear(16 * 5 * 5, 120), BatchNorm(120, 2), nn.Dropout(0.5),
    nn.ReLU(),
    nn.Linear(120, 84), BatchNorm(84, 2), nn.Dropout(0.5),
    nn.ReLU(),
    nn.Linear(84, 10))

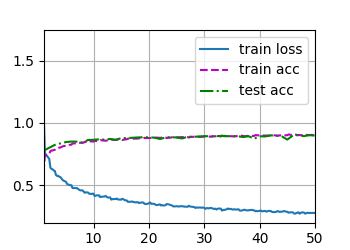

lr, num_epochs, batch_size = 0.05, 50, 256
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)
d2l.train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())
d2l.plt.show()

Output

loss 0.277, train acc 0.904, test acc 0.898

39096.3 examples/sec on cuda:0

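Without dropout there is obvious overfitting. It's like exam-oriented education: drill too many practice problems and the brain goes rigid (just kidding, haha)

😂

By the way, your notes gave me an idea: recording only the essential core like this is a nice format.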