import torch

from torch import nn

from d2l import torch as d2l

net = nn.Sequential(

# 这里使用一个11x11的更大窗口来捕获对象

# 步幅为4,以减少输出的高度和宽度

# 输出通道数远大于LeNet

nn.Conv2d(1, 96, kernel_size=11, stride=4, padding=1), nn.ReLU(),

nn.MaxPool2d(kernel_size=3, stride=2),

# 减小卷积窗口,用填充为2来使得输入与输出的高和宽一致,且增大输出通道数

nn.Conv2d(96, 256, kernel_size=5, padding=2), nn.ReLU(),

nn.MaxPool2d(kernel_size=3, stride=2),

# 使用3个连续的卷积层和较小的卷积窗口

# 除了最后的卷积层,输出通道数进一步增加

# 在前两个卷积层之后,池化层不用于减少输入的高度和宽度

nn.Conv2d(256, 384, kernel_size=3, padding=1), nn.ReLU(),

nn.Conv2d(384, 384, kernel_size=3, padding=1), nn.ReLU(),

nn.Conv2d(384, 256, kernel_size=3, padding=1), nn.ReLU(),

nn.MaxPool2d(kernel_size=3, stride=2),

nn.Flatten(),

# 这里全连接层的输出数量是LeNet的好几倍,使用暂退层来缓解过拟合

nn.Linear(6400, 4096), nn.ReLU(),

nn.Dropout(p=0.5),

nn.Linear(4096, 4096), nn.ReLU(),

nn.Dropout(p=0.5),

# 最后是输出层。因为这里使用的是Fashion-MNIST, 所以类别数为10,而非论文中的1000

nn.Linear(4096, 10),

)

batch_size = 128

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size, resize=224)

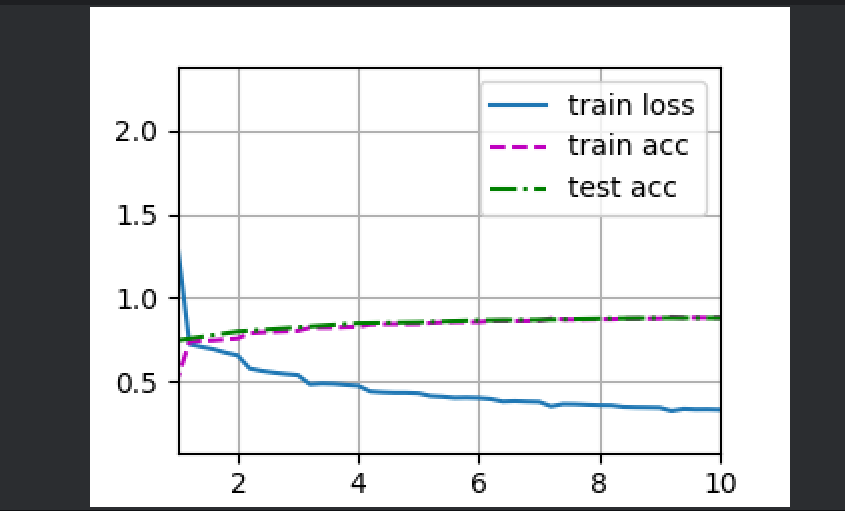

# 训练AlexNet

lr, num_epochs = 0.01, 10

d2l.train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())

d2l.plt.show()

Output:

loss 0.329, train acc 0.880, test acc 0.878

1216.9 examples/sec on cuda:0