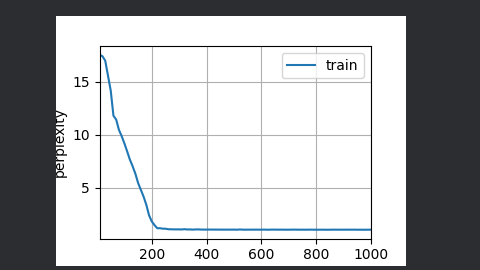

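A deep RNN simply makes the hidden (h) part deeper: several recurrent layers are stacked, and each layer's hidden states become the input sequence of the layer above it.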
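To make the stacking concrete, here is a minimal sketch that inspects the tensor shapes of a two-layer `nn.LSTM`. The toy sizes (`seq_len`, `batch`, `n_in`, `n_hidden`, `n_layers`) are made up for illustration and are not the values used in the training run.

```python
import torch
from torch import nn

# Hypothetical toy sizes, chosen only to make the shapes easy to read.
seq_len, batch, n_in, n_hidden, n_layers = 5, 3, 8, 16, 2

lstm = nn.LSTM(n_in, n_hidden, n_layers)
X = torch.randn(seq_len, batch, n_in)  # (num_steps, batch_size, num_inputs)
output, (h_n, c_n) = lstm(X)

out_shape = tuple(output.shape)  # top layer's hidden state at every time step
h_shape = tuple(h_n.shape)       # final hidden state of each layer
c_shape = tuple(c_n.shape)       # final memory cell of each layer

# Layer 0 reads the inputs; layer 1 reads layer 0's hidden states.
w0_shape = tuple(lstm.weight_ih_l0.shape)  # (4 * n_hidden, n_in)
w1_shape = tuple(lstm.weight_ih_l1.shape)  # (4 * n_hidden, n_hidden)

print(out_shape, h_shape, c_shape, w0_shape, w1_shape)
```

The leading dimension of `h_n` and `c_n` equals the number of layers, while `output` only exposes the top layer. The input-to-hidden weights of the second layer have `n_hidden` columns because that layer consumes the first layer's hidden states rather than the raw inputs.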

```python
import torch
from torch import nn
from d2l import torch as d2l

# Load the Time Machine dataset as minibatches of token sequences
batch_size, num_steps = 32, 35
train_iter, vocab = d2l.load_data_time_machine(batch_size, num_steps)

# Two stacked LSTM layers (num_layers=2) with 256 hidden units each
vocab_size, num_hiddens, num_layers = len(vocab), 256, 2
num_inputs = vocab_size
device = d2l.try_gpu()
lstm_layer = nn.LSTM(num_inputs, num_hiddens, num_layers)
model = d2l.RNNModel(lstm_layer, vocab_size)
model = model.to(device)

# Train the model, then display the training curve
num_epochs, lr = 1000, 2
d2l.train_ch8(model, train_iter, vocab, lr, num_epochs, device)
d2l.plt.show()
```

perplexity 1.0, 253085.8 tokens/sec on cuda:0

time traveller for so it will be convenient to speak of himwas e

traveller with a slight accession ofcheerfulness really thi
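The reported perplexity is the exponential of the average per-token cross-entropy loss, so a value of 1.0 means the model assigns probability close to 1 to every correct next token in the training text. After 1000 epochs on a small corpus, this likely reflects memorization of the training data rather than generalization. A minimal sketch of the calculation follows; the helper `perplexity` and the 28-token vocabulary size are illustrative assumptions, not taken from the run above.

```python
import math

def perplexity(token_losses):
    """Return exp of the mean per-token cross-entropy (natural log)."""
    return math.exp(sum(token_losses) / len(token_losses))

# Near-certain predictions: loss close to 0, perplexity close to 1.
confident = [-math.log(0.999)] * 4

# Uniform guessing over a hypothetical 28-token vocabulary:
# perplexity equals the vocabulary size.
uniform = [-math.log(1 / 28)] * 4

ppl_confident = perplexity(confident)
ppl_uniform = perplexity(uniform)
print(ppl_confident, ppl_uniform)
```

Read this way, perplexity is the effective number of equally likely choices the model is weighing at each step: the vocabulary size for a uniform guesser, and 1 for a model that always knows the next token.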